Context Engineering: Why Feeding AI the Right Context Matters

In the rush to access the latest AI models and capabilities, we’re overlooking something fundamental: how we organize and present information to these tools often matters more than the model itself.

I discovered this through my own frustrating attempts to get consistent, high-quality results from AI tools. Around the same time, I came across a post by technologist Imran Peerbhai in which he used the term ‘context engineering’, language that echoed a discipline I’d already been practicing across product and system design. His framing helped surface something I’d long observed: the way we structure and manage context for AI is often more important than the model itself. That insight reinforced my belief that engineering for context isn’t just a prompt strategy; it’s a foundational discipline for modern product systems.

The more I explored this idea using Gemini Deep Research, the clearer it became: the gap between mediocre and meaningful AI results often comes down to how deliberately we structure and provide context (1). The most advanced AI model in the world can’t make up for poor or missing context.

This exploration has fundamentally changed my approach to AI implementation. Instead of chasing the latest models, I now focus on how to prepare and structure context for the tools we already have. What I found suggests that this shift in focus, from raw AI capability to context engineering, could unlock significantly more value from existing AI investments.

What is Context Engineering?

Context engineering is the systematic process of designing and implementing systems that capture, store, and retrieve context for AI tools. It focuses on building frameworks that make AI outputs more relevant and valuable for specific situations (3). Whether you work with enterprise AI systems or consumer AI tools, the quality of the context you provide shapes the quality of the results.

Current Approaches to Context Engineering

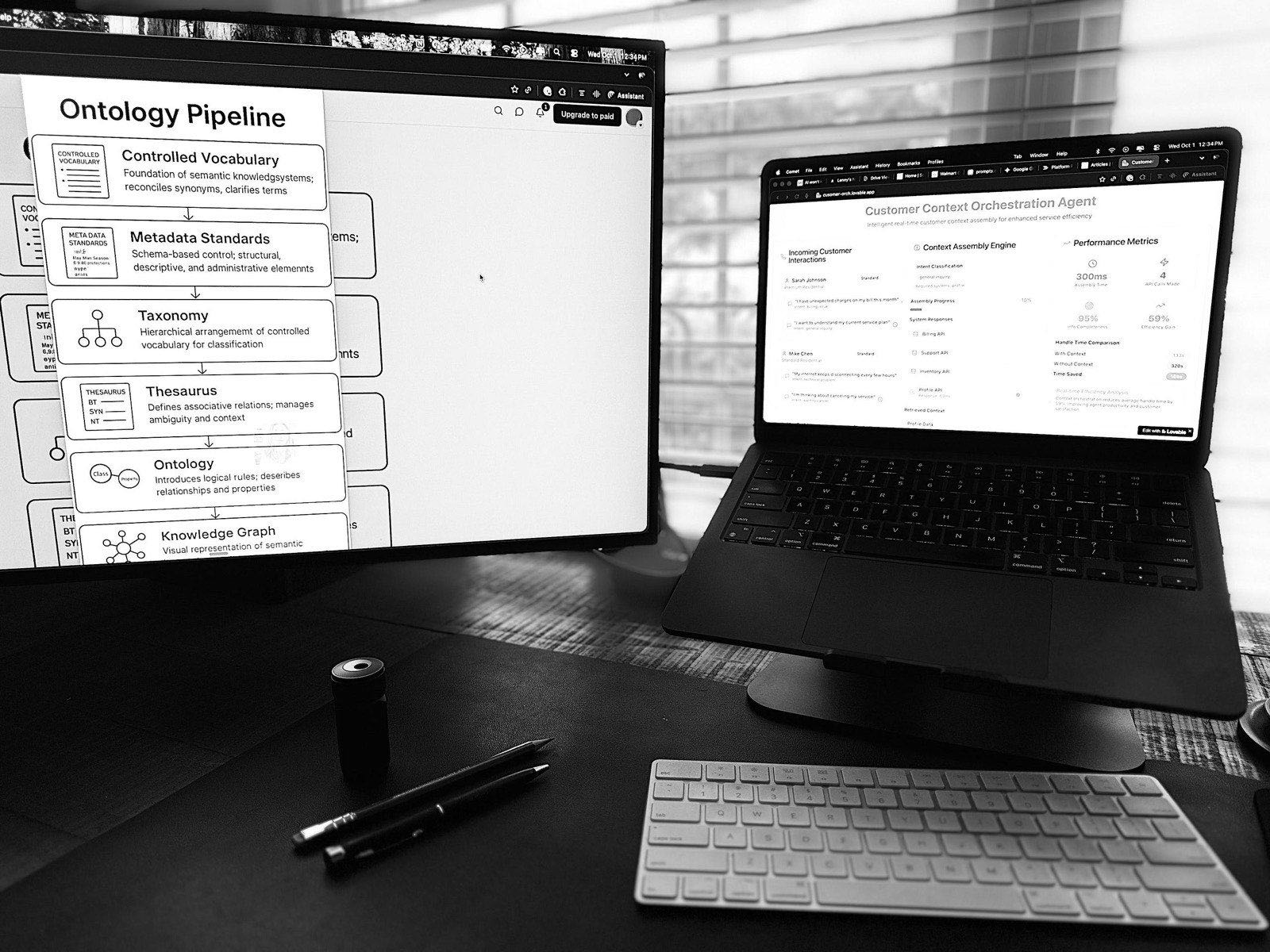

As of early 2025, there are four main approaches to enhancing AI with context (2):

- Retrieval-Augmented Generation (RAG): Platforms like Perplexity demonstrate RAG’s effectiveness by connecting AI to external data sources in real time. By organizing research into thematic Spaces, users can retrieve relevant information quickly, so that AI responses draw on the most up-to-date data available.

- Prompt Engineering: Crafting structured, step-by-step prompts is central to helping AI interpret and respond to complex tasks. For instance, features like Claude’s Projects let users group related prompts and context together, creating a structured environment where the AI can deliver more consistent, contextually aware results over the course of a task. Users can keep working in the project and update its context over time, so the AI adapts to evolving needs within a single workspace. This illustrates how prompt engineering benefits from clarity and continuity across conversations.

- Adaptive Prompting: ChatGPT’s Memory feature showcases adaptive prompting in action, retaining key details from previous conversations to refine and personalize subsequent responses. This iterative approach helps the AI provide increasingly relevant outputs over time.

- Contextual Language Models (CLMs): In healthcare, PubMedBERT demonstrates how domain-specific CLMs can assist researchers in understanding complex biomedical texts. Similarly, academic tools like Elicit use specialized training to help researchers summarize papers, find relevant research, and generate new hypotheses, showing how CLMs can make AI outputs more relevant for specific applications. These models shine when navigating complex, domain-specific language or providing specialized recommendations.
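To make the retrieval idea behind RAG more concrete, here is a minimal sketch in Python. It is not how Perplexity or any other product works internally. It assumes a tiny in-memory list of documents and a simple word-overlap score, where a real system would typically use a search index or embeddings. The names `DOCUMENTS`, `score`, `retrieve`, and `build_prompt` are hypothetical and exist only for this illustration.

```python
import re
from collections import Counter

# Hypothetical in-memory "knowledge base" (illustrative text, not real policies).
DOCUMENTS = [
    "Refund policy: customers can request a refund within 30 days of purchase.",
    "Shipping policy: standard shipping takes 5 to 7 business days.",
    "Support hours: the help desk is open Monday through Friday.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, document: str) -> int:
    """Count how many query words also appear in the document."""
    query_counts = Counter(tokenize(query))
    doc_words = set(tokenize(document))
    return sum(count for word, count in query_counts.items() if word in doc_words)


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Return up to top_k documents that share at least one word with the query."""
    scored = [(score(query, doc), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for points, doc in scored[:top_k] if points > 0]


def build_prompt(query: str, documents: list[str], top_k: int = 1) -> str:
    """Assemble a prompt that places the retrieved context ahead of the question."""
    context = retrieve(query, documents, top_k)
    context_block = "\n".join(f"- {c}" for c in context) or "- (no relevant context found)"
    return (
        "Use only the context below to answer.\n\n"
        f"Context:\n{context_block}\n\n"
        f"Question: {query}"
    )


prompt = build_prompt("How long do I have to request a refund?", DOCUMENTS)
print(prompt)
```

The design point is the ordering of steps: the relevant material is selected first, and only then is the model asked the question, so the answer can draw on information the model was never trained on.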

The Context Engineering Framework

These approaches show how AI tools use context, but implementing them effectively requires a structured way of thinking about context itself. Success with any of them, whether you’re using RAG, prompt engineering, or other methods, depends on having a solid foundation for managing context.

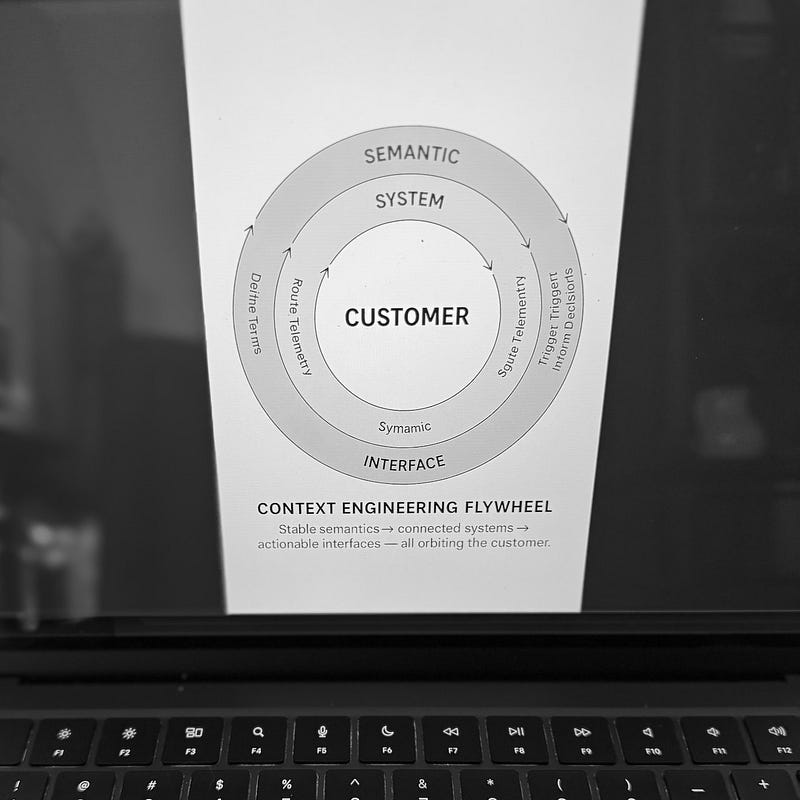

Making context engineering work comes down to three key elements (3):

- Knowledge Architecture: The foundation of context engineering. This involves organizing critical information systematically, identifying key relationships between different pieces of information, and documenting how decisions are typically made.

- Integration Systems: The bridges between information and AI tools. These systems maintain and structure context in ways that AI can process effectively, ensuring consistency across different applications and use cases.

- Implementation Strategy: The roadmap for success. This addresses how contextualized AI solutions are deployed and measured, including tracking effectiveness and refining the approach based on results.

Example: For this article, I began by using Gemini Deep Research to gather insights on how different industries approach context engineering. This step created a structured knowledge base (Knowledge Architecture). Next, I organized my work in Claude’s Projects to maintain context across multiple conversations. This ensured consistency and continuity (Integration Systems). Finally, I tested these insights by writing this article, validating the framework through practical application (Implementation Strategy).
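For readers who think in code, the first two elements can be sketched as a small data structure. This is one possible shape under stated assumptions, not a standard schema: the class names `ContextItem` and `KnowledgeBase`, their fields, and the sample pricing content are all hypothetical. The knowledge architecture is the set of topics, their relationships, and the decision notes; the integration step is the `render` method, which turns that structure into text an AI tool can accept.

```python
from dataclasses import dataclass, field


@dataclass
class ContextItem:
    """One piece of organized knowledge and the topics it relates to."""
    topic: str
    summary: str
    related_topics: list[str] = field(default_factory=list)


@dataclass
class KnowledgeBase:
    """Knowledge architecture: topics, their relationships, and decision notes."""
    items: dict[str, ContextItem] = field(default_factory=dict)
    decision_notes: list[str] = field(default_factory=list)

    def add(self, item: ContextItem) -> None:
        self.items[item.topic] = item

    def render(self, topic: str) -> str:
        """Integration step: turn a topic and its direct relations into prompt-ready text."""
        item = self.items[topic]
        lines = [f"Topic: {item.topic}", f"Summary: {item.summary}"]
        for name in item.related_topics:
            related = self.items.get(name)
            if related is not None:  # skip relations that were never documented
                lines.append(f"Related ({related.topic}): {related.summary}")
        for note in self.decision_notes:
            lines.append(f"Decision note: {note}")
        return "\n".join(lines)


# Illustrative placeholder content, invented for this sketch.
kb = KnowledgeBase()
kb.add(ContextItem("pricing", "Three tiers billed annually.", ["discounts"]))
kb.add(ContextItem("discounts", "Nonprofits receive 20% off."))
kb.decision_notes.append("Discount exceptions need approval from the sales lead.")

context_text = kb.render("pricing")
print(context_text)
```

The rendered text could be pasted into a Claude Project or placed at the top of a prompt. Keeping the structure separate from the rendering means the same knowledge can feed more than one tool.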

Getting Started with Context Engineering

You can begin exploring context engineering today with tools you might already use. Enable ChatGPT’s Memory feature to maintain context across conversations. Create a Project in Claude and upload relevant documents to provide context. Use Perplexity’s Spaces to organize and retrieve research materials. Each of these features demonstrates context engineering principles in action, helping you see firsthand how context improves AI outputs.

Key Questions in Context Engineering

Even with advanced tools like Gemini Deep Research, it became clear that effective context engineering is less about the technology itself and more about the preparation. The key questions remain consistent:

- What context do your AI tools need to deliver better results?

- How can you maintain context effectively across conversations?

- How do you know when your context engineering is working?

These questions matter whether you’re using AI for customer support, research, writing, or any other task. You’ll find answers by trying different approaches and seeing what works best for your specific needs.
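One rough way to approach the third question is to rate responses produced with and without your prepared context and compare the averages. The sketch below assumes a simple 1 to 5 rating scale assigned by a human reviewer. The ratings are made-up placeholder values, not results from any real evaluation, and the names `ratings`, `average_rating`, and `context_lift` are hypothetical.

```python
from statistics import mean

# Placeholder ratings (1-5) invented for illustration; replace with your own reviews.
ratings = [
    {"variant": "no_context", "rating": 2},
    {"variant": "no_context", "rating": 3},
    {"variant": "no_context", "rating": 1},
    {"variant": "with_context", "rating": 4},
    {"variant": "with_context", "rating": 5},
    {"variant": "with_context", "rating": 3},
]


def average_rating(records: list[dict], variant: str) -> float:
    """Average the ratings recorded for one variant."""
    return mean(r["rating"] for r in records if r["variant"] == variant)


def context_lift(records: list[dict]) -> float:
    """Difference in average rating between responses with and without context."""
    return average_rating(records, "with_context") - average_rating(records, "no_context")


print(f"Average lift from added context: {context_lift(ratings)}")
```

A handful of ratings like this is far too small to prove anything, but tracking the same comparison over time gives you a consistent signal for whether changes to your context are helping.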

References:

- “Why Context is Crucial for Effective Generative AI” — qBotica

- “Contextual AI: The Next Frontier of Artificial Intelligence” — Adobe Experience Cloud