Context Engineering, One Year Later

I was in a conversation after an AI & Product meetup the other night talking about AI and reliability and how hard it is to go beyond “96%-97%” accuracy. This struck me as really high for a probabilistic system (and far higher than my own efforts that I would rate at closer to 80%). When I asked how they achieved this, they said “context engineering.”

About a year ago, I wrote an article trying to articulate why the way you organize information for AI matters more than which model you’re using when chatting with Claude or ChatGPT. I called it “context engineering”, a term I came across on a random LinkedIn post that fit the point I was trying to make about getting better results from AI by providing the right context. I had zero idea how quickly that idea would go from a simple observation to a systematic approach before the year was out.

Context in January 2025

In the article back in January, I was trying to convey that how we organize and present information to AI often matters more than the model itself. People were getting dramatically better results from the exact same AI tools based on how they deliberately structured the information they were feeding the conversation.

At the time there were new methods being used to do this. Now familiar approaches like RAG (Retrieval Augmented Generation) and Prompt Engineering were already well established, and the AI companies were building features into their systems to capture and remember context more easily such as Claude’s Projects, ChatGPT’s Memory feature, Perplexity’s Spaces. But the idea that giving the model better context and data might matter more than training the model to be smarter was still counterintuitive to most people as we waited for the next model release to just “know” what we wanted to talk about.

When Everyone Started Talking About It

By mid-2025, the term took off. Tobi Lutke started talking about it. Andrej Karpathy picked it up. Suddenly everyone was talking about context engineering. But I didn’t agree with those narrow definitions that were treating it like a purely technical practice (RAG pipelines, prompt optimization, retrieval systems). Context engineering was always bigger than that to me.

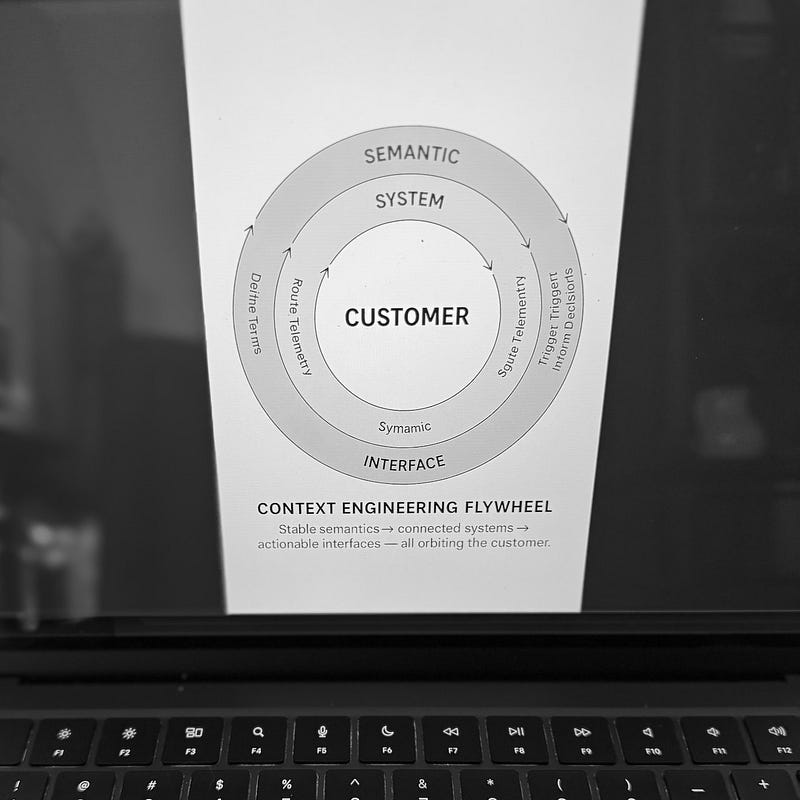

So I wrote a follow-up in July trying to widen the conversation. Context engineering has been around way longer than AI. Historians do it when they interpret primary sources, building the frame that lets a 300-year-old document make sense to modern readers. Lawyers do it when they apply precedent to new cases. Because AI is not just responding to our inputs, but providing detail based on its massive training, figuring out how to maintain meaning when information leaves its original context becomes the real challenge to making AI conversations truly “useful”.

What I didn’t expect was how quickly people would develop the technology to help solve this problem.

Plumbing Context into AI

Andrej Karpathy (former AI lead at Tesla, cofounder of OpenAI) has a useful way of thinking about this. He describes anything baked into the model’s training as “a hazy recollection of what you read a year ago.” But anything you put in the context window is “directly in working memory.” It’s the difference between vaguely remembering something versus having it open right in front of you.

That distinction matters because of scale. As Nate B Jones points out, when an AI agent goes to work on a real task, your original prompt might be 0.1% of what it’s actually processing. The rest is context pulled in from documents, databases, APIs, memory systems. The infrastructure that grew up around context engineering is basically about managing that other 99.9%.

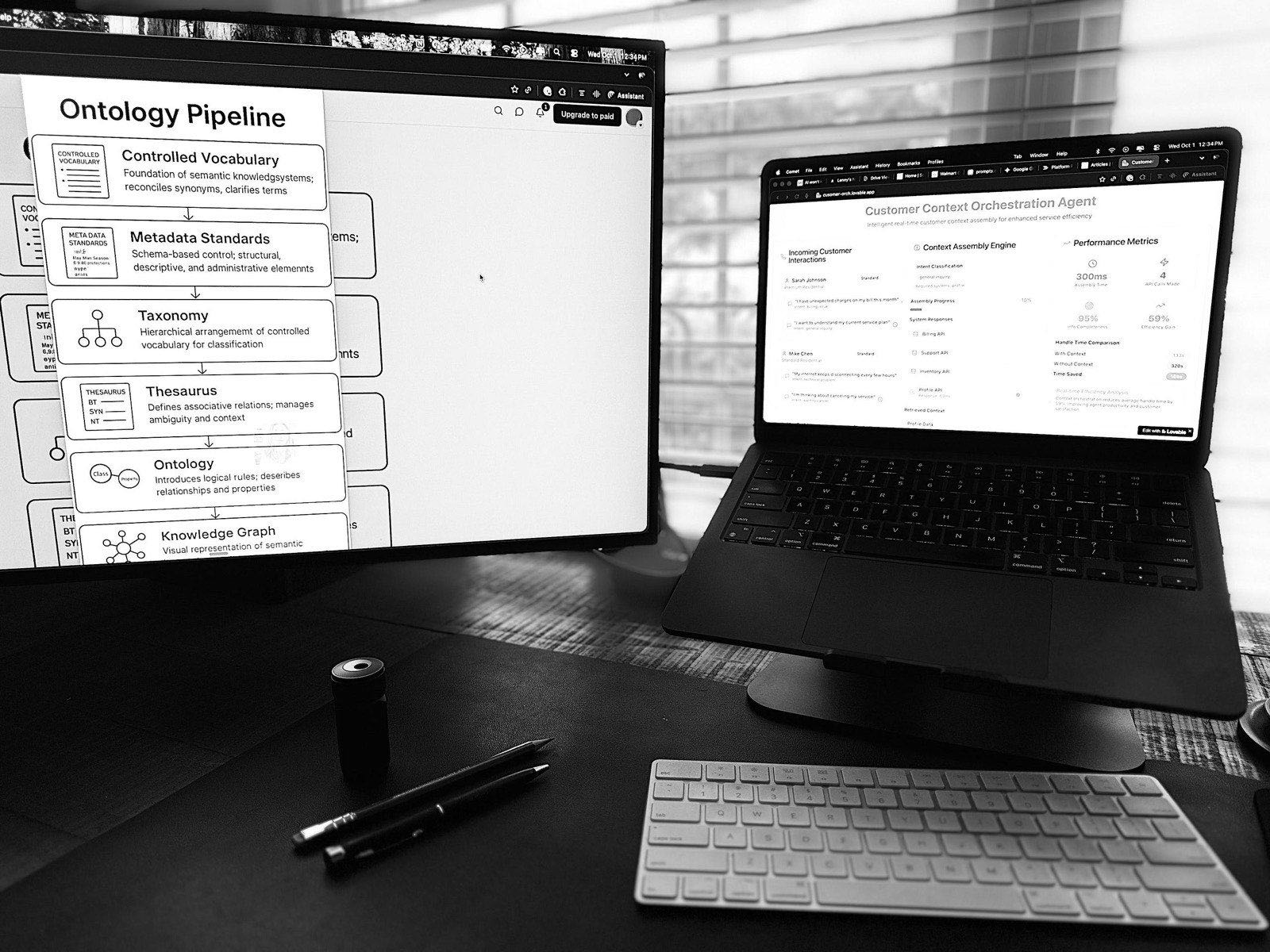

Two pieces of infrastructure emerged to handle this. The first is connectivity: how do you connect AI to where your data actually lives? One approach that got traction is the Model Context Protocol (MCP), which Anthropic launched in late 2024 and OpenAI and Google adopted by late 2025. People call it the “USB-C of AI,” a standardized connector so everything can talk to everything. But it’s not the only approach. Some practitioners prefer simpler methods like CLI tools, which can be more efficient depending on the use case.

The second is assembly. RAG started as “find relevant documents and add them to the prompt.” Now it’s more like a research assistant that gathers what you need from multiple places before you even ask the question. It looks at what you’re trying to do, searches across documents and databases and live data, figures out what’s actually relevant, and organizes it so the model can process it efficiently. By the time your query reaches the model, the context is already curated.

This infrastructure is why context engineering won out over fine-tuning for most knowledge work. When your information changes (and it always does), updating the context layer is immediate. Retraining a model takes weeks to months, plus testing, plus deployment. Companies figured out it’s far cheaper to keep the model general and make the context specific.

None Of This Matters Without Knowledge

Jessica Talisman, an information architect who’s been writing extensively about this intersection, directly points out that what AI people are calling “context engineering” is what knowledge architects and librarians have been doing for decades.

Jessica writes about process knowledge (tacit, living in people’s heads, scattered across Slack channels and institutional memory) versus procedural knowledge (formalized, encoded, machine-readable). Context engineering, from her perspective, is basically the act of turning process into procedure. And that work is hard. As she puts it, “data does not self-organize and describe itself, no matter how much pixie dust is distributed.”

Context engineering infrastructure depends on the right context. MCP can connect AI to your data sources, RAG can assemble context from multiple places, but none of that helps if the context you needed was never captured in the first place. Implicit operational information like payment terms, vendor preferences, exception handling (the things that human employees just know or can ask about) needs to be formalized before any system can use it.

It’s Not About the Prompt

I still see people getting “Prompt Engineering” certificates and countless posts about “Top Prompts for X”. Prompting absolutely matters, and getting better at it helps, but people who get consistently good results are also thinking about context. What background does this specific task actually need? What information is relevant? And just as important: what’s noise?

Going back to Karpathy’s framing — if context is working memory, then cluttering it with irrelevant stuff makes it harder to think clearly. The model’s training doesn’t change, but its ability to apply that training depends on what it’s paying attention to. Noise competes for attention. I think of it like briefing a colleague before handing them a task — I wouldn’t point them at the shared drive and say “good luck.”

Is 99.999% The Right Goal?

Back to that conversation at the meetup. When they said 96–97% accuracy, my first thought was that’s remarkably high for a probabilistic system. My second thought was, how the heck are you going to close that last 3%?

These models just aren’t built for 99.999% reliability. That thinking implies determinism (same input, same output every time), and LLMs are fundamentally not deterministic. Context engineering can get you closer to the right answer in fewer turns with the AI, and it can reduce hallucinations by giving the model real information to work with instead of asking it to guess. But it just can’t deliver high reliability. The way it’s built doesn’t support that.

Which is why human’s aren’t going away. These tools can draft, synthesize, and surface relevant information, but they can’t be held accountable for being wrong. For high-stakes decisions, you still need a human in the loop who can verify the output and own the result. Human partnership is the model for how AI integrates into business workflows, not a stopgap until the technology gets better.

Agents Make Context Essential

The shift from generative AI to agentic AI changes the stakes. When AI is drafting text, wrong context means you get a bad first draft. When AI is executing a workflow, wrong context means it takes the wrong action. An agent needs to know what it’s allowed to do, what the constraints are, what the business rules are. All of that is context. And if it’s not there, you’re hoping the agent figures it out on its own.

Agentic AI makes the upfront knowledge work the critical path to safely allowing AI any kind of autonomy. The implicit operational stuff that human employees just know (escalation paths, exception handling, which rules are actually rules versus which ones bend) has to be captured and organized so the agent can make safer, more “correct” decisions, and know when to bring it back to a human instead of trying to figure it out itself.