The Partnership Matrix: How Humans and AI Can Work Together

I spent a recent Sunday morning reading through competing visions for AI’s future. A New Yorker article (“Two Paths for AI”) investigated two views of current thinking: “AI 2027,” which presents a provocative, almost catastrophic view of the next decade, and “AI as Normal Technology,” a pragmatic perspective that sees AI as another technology finding its place in our world.

As I explored each position, two words kept shouting in my head: judgment and accountability. Both views of AI’s future hinged on the same question: who is empowered to make the decision, and who is ultimately accountable for it?

I think the pragmatists are closer to right. AI and its infrastructure will become familiar, because humans adapt to every new technology over time. But AI does something very different from its predecessors: it generates responses that feel like thinking. Because it’s so convincing in its mimicry of human reasoning and conversation, it is super tempting to look at AI as a replacement for human thinking.

There’s real pressure to offload decision-making across every corner of our lives. Just last night I was joking with neighbors about how it’s always stressful shopping at the grocery store because of the decision overload. The promise of removing stress and minutiae is deeply appealing. But as Ethan Mollick describes it, we’re working with an “alien intelligence” that doesn’t process information or reach conclusions the way humans do. Simply handing over our decision-making to the machine will fail more often than not. That doesn’t make it a binary question of “does AI do this, or do I?” Instead, think of how an intentional partnership between humans and AI could look: understanding what AI can contribute to decisions while maintaining human judgment where it is necessary. Every time we decide how to work with AI, we’re making a choice about how human and machine intelligence can best complement each other, so let’s push harder and define what that relationship should look like.

I’m convinced this is the most important question right now for anyone integrating AI. How do we design AI–human partnerships that leverage both human judgment and machine capabilities, with intention and thoughtful deliberation as to who is ultimately accountable for what the AI does and how we use it?

Introducing the AI Partnership Matrix

The current mindset for teams and businesses seems to be “can AI do this for me?” They test models, measure accuracy, run pilots. It’s a natural “try before you buy” instinct, but it keeps AI isolated, or applied only to “tasks” and easily repeatable work. AI is a form of intelligence, not just automation. Treating it like a workflow tool misses the value that comes from enhancing critical thinking when it is paired with human intelligence.

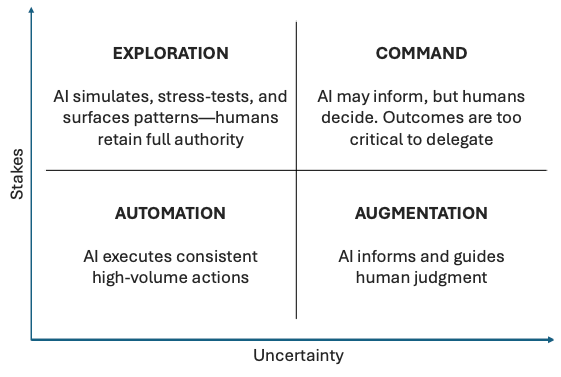

Opening this door also leads to a lot of questions, mostly focused on defining the right working relationship between humans and AI. To do that, we need a clearer way to describe the work itself, so we can define how both sides should partner on it. Most frameworks focus on what AI can do; this one helps leaders decide how humans and AI should work together. For this exercise, let’s start by breaking the work down along two dimensions:

- Stakes: What’s the impact if we get this wrong?

- Uncertainty: How clear are the inputs and criteria for this task?

These two dimensions in a 2×2 grid give us four zones for decision types, each suggesting a different model for AI–human collaboration. While models like the Cynefin framework or levels of automation focus on complexity or control, this matrix frames collaboration through the lens of decision quality and consequence, which is what matters in modern AI–product leadership.

The Four Zones

Automation Zone (Low Stakes, Low Uncertainty): Clear rules, minimal error impact, consistent patterns. Things like customer service routing, expense categorization, and swivel-chair data input across disconnected systems are some good examples. When these go wrong, it’s annoying but the risk is super low. The criteria are often clear enough that AI handles them reliably, freeing humans for more complex work.

Augmentation Zone (Low Stakes, High Uncertainty): Inputs are fuzzy, but errors aren’t catastrophic. Activities like brainstorming, market research, and early ideation fit here. In this space, AI works as a thought partner, helping surface patterns we might miss while humans provide creative direction and context.

Exploration Zone (High Stakes, Low Uncertainty): Clear parameters but significant consequences. Work like pricing optimization, inventory forecasting, and risk assessment belongs in this zone. Rules are definable and partially automatable, but mistakes cost real money or impact real lives. AI models scenarios; humans validate edge cases in a true collaborative dance.

Command Zone (High Stakes, High Uncertainty): High stakes meet ambiguous criteria. Strategic pivots, ethical calls, crisis response. AI might provide analysis or feedback, but the final call requires human judgment, especially when the risk is too high, or human values and ethics are in play.
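For teams that want to apply the grid to a backlog of decisions, the four zones can be sketched as a simple lookup. This is a minimal illustration, not a prescribed tool: the names `Level`, `Zone`, `PARTNERSHIP_MODEL`, and `classify` are hypothetical, and reducing stakes and uncertainty to "low" or "high" is a simplifying assumption, since real decisions sit on a spectrum.

```python
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


class Zone(Enum):
    AUTOMATION = "Automation"
    AUGMENTATION = "Augmentation"
    EXPLORATION = "Exploration"
    COMMAND = "Command"


# Zone lookup keyed by (stakes, uncertainty), mirroring the 2x2 grid.
ZONES = {
    (Level.LOW, Level.LOW): Zone.AUTOMATION,
    (Level.LOW, Level.HIGH): Zone.AUGMENTATION,
    (Level.HIGH, Level.LOW): Zone.EXPLORATION,
    (Level.HIGH, Level.HIGH): Zone.COMMAND,
}

# Short paraphrase of the collaboration model described for each zone.
PARTNERSHIP_MODEL = {
    Zone.AUTOMATION: "AI handles the work; humans are freed for complex tasks",
    Zone.AUGMENTATION: "AI is a thought partner; humans give direction and context",
    Zone.EXPLORATION: "AI models scenarios; humans validate edge cases",
    Zone.COMMAND: "AI provides analysis; humans make the final call",
}


def classify(stakes: Level, uncertainty: Level) -> Zone:
    """Return the zone for a decision given its stakes and uncertainty."""
    return ZONES[(stakes, uncertainty)]


# Example: pricing optimization has high stakes but clear criteria.
zone = classify(Level.HIGH, Level.LOW)
print(zone.value, "->", PARTNERSHIP_MODEL[zone])
```

The value of the exercise is less in the code than in the conversation it forces: someone has to state, for each decision, how high the stakes are and how clear the criteria are.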

I think organizations struggle most with recognizing that they need a partnership model in the first place. They treat Exploration work like Automation, expecting AI to operate autonomously where human partnership would yield better results. Or they keep Command work entirely manual when AI analysis could enhance human decision-making. They miss the reality that every job going forward will probably be a partnership between humans and AI.

Beyond the Grid: Why Human Context Still Rules

We need to take this partnership approach and be a part of all AI decision-making simply because AI operates without human context. It lacks the accumulated judgment, organizational history, implicit knowledge, and inherent emotion that shape how decisions actually get made.

This connects directly to what I’ve explored in “Context Is What Holds the System Together.” Context is the frame showing what happened, where data came from, and why it matters. AI processes data efficiently, but preserving and interpreting meaning remains fundamentally human work. When information flows through systems, we need meaning to travel alongside data.

Without deliberate design for context preservation, AI defaults to statistical proxies. These can be technically correct while missing the point entirely. We see this in pricing algorithms that optimize revenue while eroding customer trust, or hiring systems that optimize for “fit” based on communication patterns, gradually narrowing the diversity of thought in teams.

In “Leading Products You Don’t Fully Control,” I discussed how we’re creating three-way relationships between product teams, AI systems, and users. When AI makes reasonable but wrong suggestions, the accountability lines blur. Who’s responsible? How do we make capabilities and limitations transparent? These questions matter because AI’s convincing nature makes it hard to spot when it’s wrong.

Metrics and Models: How Success Looks Different in Each Zone

Each zone requires fundamentally different optimization targets, reflecting the nature of the decisions being made.

In Automation, we optimize for efficiency where speed and accuracy matter most. But in Augmentation, success looks like expanded thinking and broader possibilities.

For Exploration work, we need to assess our understanding of the problem space. Are we catching edge cases? Meanwhile, Command decisions require metrics around judgment quality: not only what was decided, but whether the decision will hold up when contexts shift.

What this all means is that measuring everything by efficiency metrics will eventually push AI work toward automation, regardless of whether that serves human needs.

Leadership Reframed: From Control to Condition-Setting

This framework builds on a thread running through much of my work: designing environments that support sound, scalable decision-making. As AI handles more routine decisions, leadership becomes about shaping the environment where they happen.

In “Fewer Reports, More Impact,” I argued that influence won’t come from the number of people reporting to you, but from how your decisions shape the entire system. The Partnership Matrix gives this idea some structure, showing how to design decision-making that guides both human and AI choices.

A few potential starting points for discussion with your teams:

- Review recent decisions across the four zones: were expectations clear about AI’s role versus human judgment?

- Look for where an implementation might have moved from Automation to the Command zone unintentionally. Did you choose speed over discernment?

- Ask whether your metrics reinforce the right decision-making behavior. Remember, efficiency without quality is just a way of failing faster.

Most importantly, always ask who is accountable for the decision. The answer to this question will usually tell you if the right structure is in place.

Building Better Human-AI Partnerships

Throughout this series on AI and product leadership, I’ve been circling around a central tension: how do we maintain what’s essentially human while leveraging machine capabilities? The Partnership Matrix offers one answer: by being intentional and deliberate about which decisions need human judgment, context, and accountability. The winners in this race will probably be the ones who actually understand how to create partnerships where both human creativity and AI’s analytical power reinforce each other. Teams that design these partnerships can avoid missteps while they accelerate insight, unlock new capabilities, and make better long-term bets.

That should inspire urgency in you. Because right now, in conference rooms everywhere, teams are designing their human-AI partnerships without understanding the stakes. Most critically, they’re missing the human element that makes the whole system work in the first place. Humans bring essential ingredients for better answers, such as context, judgment, accountability, and the ability to navigate ambiguity.

Every organization faces a simple but profound question: How do we design partnerships that amplify both human and machine strengths? Get this wrong, and you’ll build an efficient machine that misses the magic of true collaboration. Get it right, and you’ll create an organization where humans and AI each contribute what they do best in service of outcomes neither could achieve alone.

I think we are all still learning what this looks like in practice. But I’ve come to believe that the most important leadership skill for the next decade won’t be understanding how to deploy AI’s capabilities. Instead, leaders will need to understand how to orchestrate partnerships that bring out the best in both human and machine intelligence.

We’re not dividing up the work. We’re learning to dance together, one partnership choice at a time.