Stop Tweaking Your Prompts: Start Managing Your AI Teammate

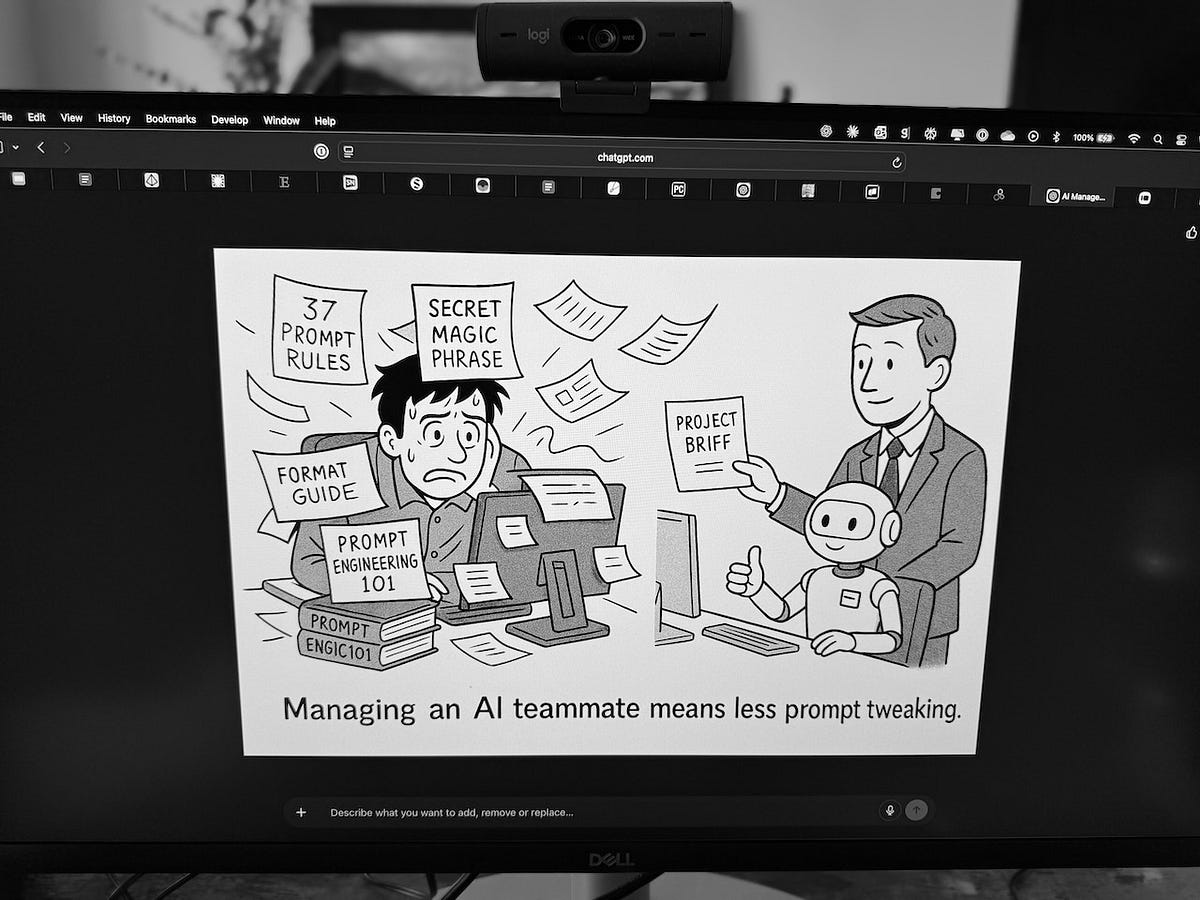

There is a general obsession with prompt engineering when working with AI. Endless posts and articles about the perfect format, the magic phrases, the secret techniques that make AI work like a marionette of some sort. But I find that’s not the right way to think about working with AI.

After a year of using these chatbots every day, I’ve found it is far simpler to work with AI by managing it like you would a person. Just like typing in the 80s, surfing the web in the 90s, and using mobile devices in the 00s, chatting with an AI will become an essential skill for all of us.

Why My Management Experience Made AI Click

I stopped worrying about prompting and started managing AI like a teammate, and everything clicked. Instead of memorizing formats and tweaking parameters, I apply the same skills that make any working relationship successful: clear communication, appropriate context, and knowing when to guide versus when to let them run.

I’ve never mastered any specific prompting technique. I’ve tried different frameworks, but I get better results by treating AI like an employee or mentee who needs active management. I coach it constantly, provide feedback on what works, point out inaccuracies (and sometimes straight-up falsehoods), and push for better output.

This approach came naturally because I’ve been managing people for most of my career. The repetition of daily AI conversations taught me what works, not unlike learning each team member’s communication style. To be clear: I’m not saying AI is human. It’s emphatically not. But it uses our primary interface, language, and the skills that make human communication effective transfer surprisingly well to AI.

Three Management Habits That Work on AI

There are many habits I’ve developed over the years to effectively manage teams and leaders, but as I work with AI I find these the most important and useful:

The verification habit: Working with AI reminds me of working with smart but inexperienced team members who sometimes confuse confidence with accuracy. AI delivers its responses with perfect conviction, whether it’s a spot-on analysis or a complete fabrication (and honestly, I’ve been taken in by AI’s confidence more times than I’d like to admit). What helps me is the same approach I use with human teams: asking follow-up questions that probe the claim. When AI shares something about user behavior or market trends, I push for specifics: ‘What data supports that?’ or ‘Break that down for me.’ I’m not really asking it to explain its reasoning, because it can’t do that (see Extended Chinese Room Thought Experiment). I’m forcing it to be concrete instead of general. When AI has to get specific, the fabrications usually become obvious, and the valid insights get clearer. Being genuinely curious leads to better outcomes whether you’re working with humans or machines.

Giving the right amount of context: I’ll ask what seems like a simple question and get garbage, then add one clarifying sentence or example and suddenly it’s brilliant. Through repeated use, you learn what context the AI needs and when to provide it. Just as some team members need the full strategic picture while others need clear next steps, your AI operates much the same way. Making this an effective partnership means being clearer about what I want because I can’t rely on the AI to fill in the gaps through intuition or shared experience.

Knowing when and how to delegate: After enough conversations, you start seeing what AI is good at (synthesis, analysis, first drafts) and where it falls flat (genuine creativity, understanding nuance, knowing when it’s wrong). This intuition only comes from hours of back-and-forth experimentation. Through that repetition I’ve developed the same delegation awareness I use with human teams, that sense of knowing what to hand off and what to keep. With AI the boundaries are different, though, and I always have to at least review the output.

Managing Your Way to Better Prompts

Once you see the connection, you realize that getting good results from AI uses the same techniques that get good results from teams:

The project brief approach: Just like kicking off a project, I’ve learned to start complex AI requests with context, constraints, and success criteria. Instead of “write me a blog post,” I’ll say “I need an 800-word blog post for product managers at enterprise companies, focusing on AI adoption challenges, with a practical but not overly technical tone.” It’s very similar to a brief I’d give a contractor or new team member.

Progressive disclosure: When tackling complex problems, I break them down just like I would when delegating to a junior team member. Start with the big picture, get alignment, then drill into specifics. “Let’s design a user onboarding flow. First, what are the key steps new users need to complete?” Then gradually add constraints and requirements as we build understanding together.

Working sessions, not one-shot commands: Outside of search requests in Perplexity, I run working sessions with AI. Iterating, refining, and course-correcting all mirror how I work with teams on complex problems, where we collaborate step by step toward an answer.

Structured check-ins: Just as I’d check progress at milestones throughout a project, I’ll take a moment halfway through our collaboration to ask: “How’s this looking so far? What challenges are you seeing?” This keeps us aligned while creating opportunities to redirect before we’re too far down the wrong path.
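For readers who like to see the shape of things, here is a minimal sketch of the brief, working session, and check-in habits above as plain Python data structures. It is only an illustration, not a recommended framework: the `Brief` and `WorkingSession` names, their fields, the placeholder replies, and the example success criterion are all hypothetical, and the code does not call any real AI service. It just builds the text you would paste or send.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """A project brief: the task, the audience, and what 'good' looks like."""
    task: str
    audience: str
    constraints: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Turn the brief into the opening message of a working session."""
        lines = [f"Task: {self.task}", f"Audience: {self.audience}"]
        if self.constraints:
            lines.append("Constraints:")
            lines += [f"- {c}" for c in self.constraints]
        if self.success_criteria:
            lines.append("Success criteria:")
            lines += [f"- {s}" for s in self.success_criteria]
        return "\n".join(lines)


@dataclass
class WorkingSession:
    """Keeps the running conversation so each turn builds on the last."""
    messages: list[dict[str, str]] = field(default_factory=list)

    def say(self, text: str) -> None:
        # Something I send: a brief, feedback, or a new constraint.
        self.messages.append({"role": "user", "content": text})

    def record_reply(self, text: str) -> None:
        # Whatever the AI sent back, pasted in by hand in this sketch.
        self.messages.append({"role": "assistant", "content": text})

    def check_in(self) -> None:
        # The structured check-in question quoted in the article.
        self.say("How's this looking so far? What challenges are you seeing?")


brief = Brief(
    task="Write a blog post about AI adoption challenges",
    audience="Product managers at enterprise companies",
    constraints=["800 words", "Practical but not overly technical tone"],
    # Hypothetical criterion, added only to show the field in use.
    success_criteria=["A reader can name one challenge and one next step"],
)

session = WorkingSession()
session.say(brief.render())                            # start with the brief
session.record_reply("(first draft would go here)")    # placeholder reply
session.say("Tighten the intro and add one concrete example.")  # coaching
session.record_reply("(revised draft would go here)")  # placeholder reply
session.check_in()                                     # pause and realign

print(brief.render())
```

Nothing here is magic formatting. The point of the sketch is that the brief is the same one I’d hand a contractor, and the session is a conversation with feedback and a check-in rather than a single command.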

We’re not discovering new techniques so much as rediscovering fundamental truths about coordination between members of a team, and it turns out these principles hold whether those members are silicon- or carbon-based.

Even AI Thinks Like a Manager

The newest AI models, like OpenAI’s o3 series, make this parallel explicit. When you peek inside their reasoning chains (which I highly recommend), you see something like this:

“Wait, what are they really asking for?” cycles through their processing, followed by “What constraints do I need to consider?” before they settle on “Let me break this into steps…” and often loop back with “Actually, let me reconsider that approach…”

This feels a lot like the internal monologue I have when an executive drops a complex request on me over email or Slack. It’s the same iterative process of understanding, constraining, planning, and reconsidering. The models are implementing structured thinking processes that managers use intuitively, whether it’s Toyota’s “Stop and Think,” military OODA loops, or any other framework we’ve learned over time that helps us make decisions under uncertainty. AI companies have essentially built management thinking into the architecture itself.

Stop Learning Prompts, Start Managing Your AI

If you’re a manager feeling left behind by the AI revolution, I have good news: you already have the skills that matter most. You just need to apply them.

Tomorrow, try approaching your next AI interaction like you would a new team member. Brief it clearly. Check its understanding. Coach and guide it through the work. I bet you’ll see immediate improvement.

The future needs both technical expertise and management skills. You might have more of the right skills than you think.