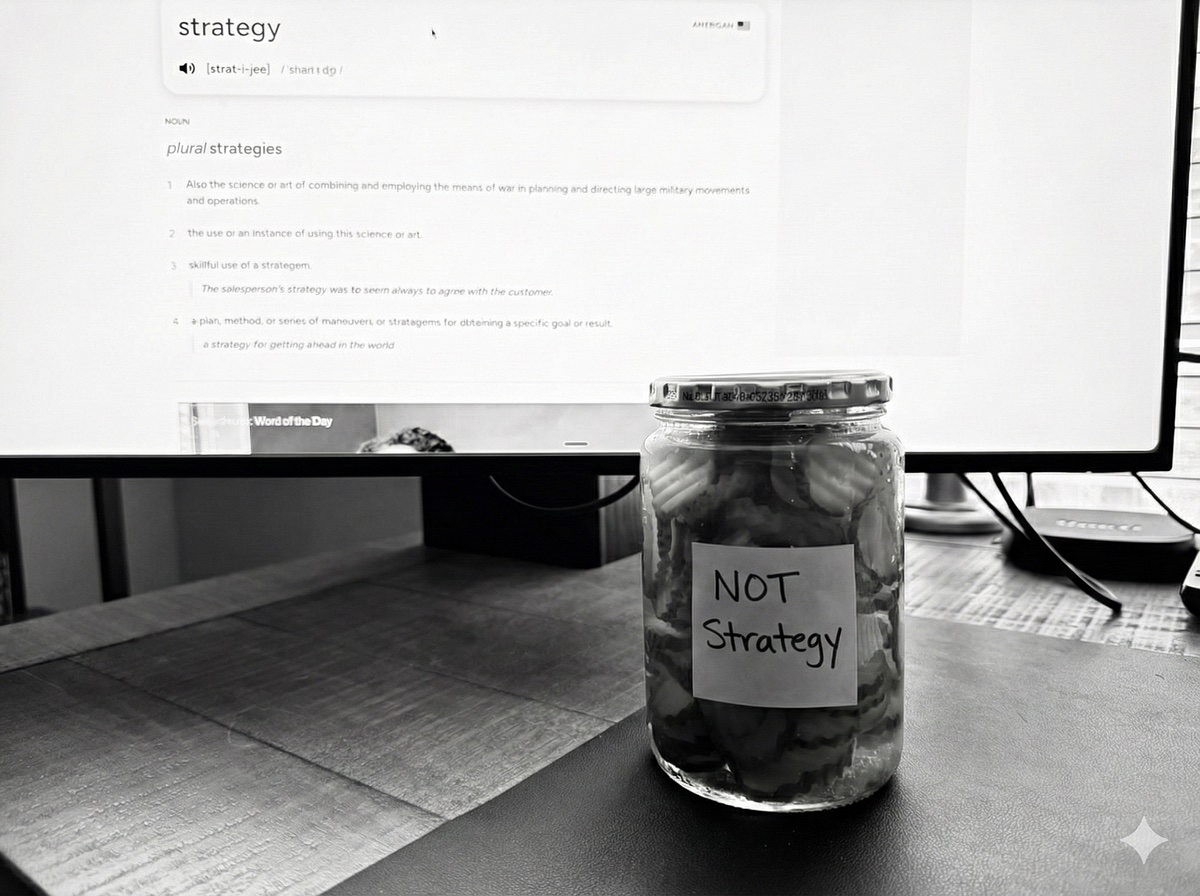

Pickles and Strategy: Why Collaborative Thinking with AI is Hard Work

The other day I asked Perplexity whether old pickles can start tasting funny. Within the same hour, I’d also asked why conversations with frontier models seem to create polarized answers and struggle with nuance.

Both questions got three confident, well-reasoned paragraphs. My tendency, like that of many people presented with a confident answer, has been to accept both with about the same level of scrutiny. But while one of those questions had a simple answer, the other one…shouldn’t have.

My son has started to heckle me for how often I turn to AI (last week it was finding the best moisturizing body wash at Walmart). And he’s not wrong. I use these tools constantly, for everything from the trivial to the strategic. But the “pickle moment” made clear something I’ve been feeling for a while now as I chatter away with my AI friends: they really want to get to an answer, even if that’s not what I’m looking for.

The Machine Wants a Decision

A few years ago at Charter, I led the product team that consolidated over forty legacy customer systems. A core problem was figuring out which records belonged to the same customer across those disparate systems. Same name but different address? Different name but same phone number? The team built and constantly refined pattern-matching algorithms that worked to resolve those cases into an answer. It was called “discriminative AI”. Feed the machine data, and it finds correlations and uses percentages to make decisions. The whole point of this approach is to get from ambiguity to an answer.
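To make that idea concrete, here is a minimal sketch of score-and-threshold record matching. It is not what the team actually built: the field names, the weights, and the 0.6 cutoff are all hypothetical, picked only to show how a percentage gets turned into a yes-or-no decision.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class CustomerRecord:
    name: str
    address: str
    phone: str


# Hypothetical weights and cutoff, for illustration only.
WEIGHTS = {"name": 0.4, "address": 0.2, "phone": 0.4}
MATCH_THRESHOLD = 0.6


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two strings, ignoring case."""
    return SequenceMatcher(None, a.lower().strip(), b.lower().strip()).ratio()


def digits(phone: str) -> str:
    """Keep only the digits so '555-0100' and '(555) 0100' compare equal."""
    return "".join(ch for ch in phone if ch.isdigit())


def match_score(a: CustomerRecord, b: CustomerRecord) -> float:
    """Combine per-field similarities into one weighted score between 0 and 1."""
    a_phone, b_phone = digits(a.phone), digits(b.phone)
    # A missing phone number never counts as a match.
    phone_score = 1.0 if a_phone and a_phone == b_phone else 0.0
    return (
        WEIGHTS["name"] * similarity(a.name, b.name)
        + WEIGHTS["address"] * similarity(a.address, b.address)
        + WEIGHTS["phone"] * phone_score
    )


def same_customer(a: CustomerRecord, b: CustomerRecord) -> bool:
    """Collapse the score into a decision: the ambiguity ends here."""
    return match_score(a, b) >= MATCH_THRESHOLD
```

Notice that `same_customer` can only ever return `True` or `False`. However murky the evidence is, the threshold forces a decision, which is exactly the behavior I’m describing.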

Generative AI inherited this tendency. LLMs are trained on vast amounts of data, but they’re also trained to get to a resolution. When Claude says “here are three options”, that isn’t a UX decision some designer made. It’s representative of how the system thinks. When you ask which approach is better, it picks one. The inherent structure of these systems, driven by probability, is to push toward giving you something that feels like an answer even when the question deserved to stay open longer.

When AI Was Just a Better Search Engine

A year ago, I was treating AI like a search engine that wrote paragraphs. I’d ask something like “What are the top skills employers look for in product leaders?” and get back a numbered list with explanations. Communication. Strategic thinking. Stakeholder management. Data-driven decision making. I’d read it, think “that seems right,” and move on to figuring out how to demonstrate those skills.

Or I’d ask for help with positioning statements for my consulting practice and get back three options, each with a slightly different angle, each sounding plausible. I’d pick whichever one resonated most and paste it into my website draft.

Ask, receive, use. That was the whole interaction model. The AI would sometimes end with “Would you like me to elaborate on any of these?” and I’d usually say no, because what I had felt sufficient. I was getting answers. The answers seemed good and I was ready to move on.

When the Answers Are Too Easy

Hundreds of hours of conversation changed how I use AI (and I expect it will change again hundreds of hours into the future). I started noticing my growing dissatisfaction with the outputs I was getting. Sometimes the answer came too fast or too easily. I’d accept an answer, start moving forward with it, and then start questioning it when the answer revealed more questions.

Sometimes what I actually needed was to explore the decision space, not resolve it. To understand the shape of the problem before picking a direction. But AI is almost compelled to get you to choose a path. “I don’t know” isn’t an answer AI can give you, because it’s not an answer. It’s uncertainty, and the system isn’t designed for that.

“Hold On, That’s Not Quite Right”

Over the past few months I’ve developed some specific habits to deal with this. All of them are about noticing when the system is pushing toward a resolution before I’m ready for one.

“Hold on” is about timing. Mid-conversation, when I feel that sense of closure starting to settle, I’ll interrupt with “wait, let’s back up” or “hold on, I think we’re missing something.” That’s a signal to both me and the AI that we need to keep the question alive a little longer, because more definition, discovery, thought, or context is needed before we wrap it up.

“That’s not quite right” is about framing. AI will often reflect back a cleaner version of what I said, and sometimes that cleaning process loses something important. When the framing doesn’t match the actual complexity of what I’m thinking through, I push back. Not because the AI is wrong exactly, but because it isn’t articulating my thinking clearly.

“Why not both” has become almost a reflex. When AI presents options as alternatives, I often find myself responding “I’m doing both” or “those aren’t mutually exclusive.” That’s an AND answer to an OR question, and I have to keep asserting it because the system keeps trying to collapse it back into a choice.

The hardest habit is the classic problem with any critical thinking exercise. Sitting with discomfort is tough, but it’s even tougher when there’s a bright shiny answer sitting in front of you that you know came too easily. When I ask something I know is actually complex and get back a response that feels complete, I know it’s time to keep pushing. The AI might be right, but I don’t agree yet, so I need to keep going back and forth with it until I get there. Having an answer isn’t the same as having done the thinking.

I’m Not Done Figuring This Out

This isn’t the same as “be critical of AI outputs.” That framing is too adversarial, like you’re trying to catch mistakes. And dismissing AI entirely misses how genuinely useful it is for a huge range of tasks. What I’ve ended up with is something more like a collaboration practice with a system that has known tendencies. I’ve learned how it’s likely to respond, and I’ve adapted my conversational patterns to compensate. I’m not sure I’d call where I’ve landed “skilled” so much as “less naive than I used to be.”

I’m not claiming I’ve figured this out by any stretch. I still catch myself accepting an answer too fast, still find myself three steps down a path before I realize I skipped something important, still have to remind myself that an answer coming too quickly is suspicious.

But I’m much faster at noticing than I was a year ago. The discomfort of holding tension, of not having an answer, of staying with a question longer than the system wants me to, has become a familiar place. Not comfortable, but tolerable. Because that discomfort is usually where the real answer lives, if there’s a real answer at all.

The pickle question had a real answer. The epistemology question might not. Learning to tell the difference, and to hold that uncertainty without rushing to resolve it, is probably the most valuable thing a year of AI collaboration has taught me.